Introduction

Let’s talk about something that feels like science fiction but is now a very real and very serious problem. You know how exciting it is to be in the crypto world: all the new tech, the potential for big gains, the feeling of being on the cutting edge. But with that cutting edge comes a new kind of risk. Scammers are now using tools so advanced that they can fool your eyes and ears.

I’ll be honest, the first time I saw a deepfake, it was a little unsettling. It was a video of a famous person saying something totally out of character, and it looked and sounded real. Now scammers are using the same technology to create believable fake videos and voice messages to steal crypto. It’s a whole new level of scamming, and it’s built on a scary idea: you can no longer fully trust what you see or hear.

This isn’t about the old, easy-to-spot scams with spelling mistakes and bad grammar. This is about scams that feel so real, they hit you right in your gut. So, let’s talk about how to spot them and, more importantly, how to protect your crypto from them.

The New Threat: AI and Deepfakes

First, let’s quickly break down what we’re dealing with.

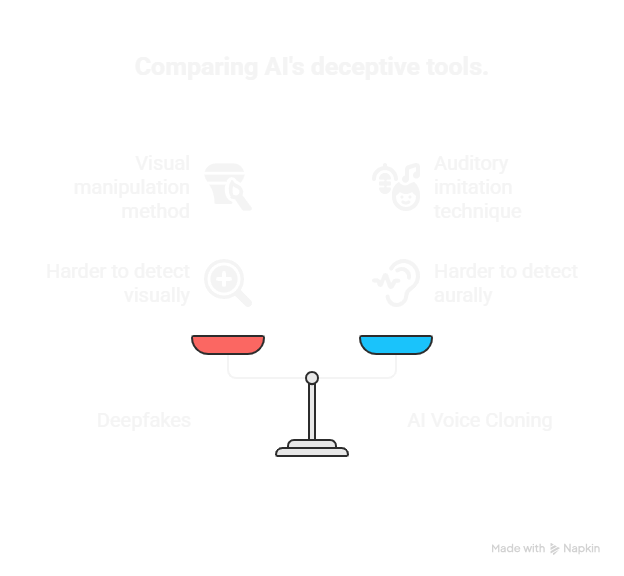

- Deepfakes: This is a fake video or image created using artificial intelligence. The AI learns how a person looks, how they talk, and what their facial expressions are like. Then it can generate a completely fake video of that person saying whatever the scammer wants.

- AI Voice Cloning: This is even scarier. An AI can listen to just a few seconds of someone’s voice and learn to imitate it convincingly. The scammer can then use this clone to make a fake phone call that sounds just like a friend, a family member, or a business partner.

I think of it like this: a scammer used to be a bad artist drawing a fake picture. You could usually tell it was a forgery. Now, with AI, the scammer is a master painter who can copy a masterpiece perfectly. It’s much harder to tell what’s real and what’s not.

How the Scams Work (The Latest Tricks)

The old scams relied on urgency and greed. These new ones add a terrifying layer of believability. Here are some of the latest tricks I’ve been seeing.

1. The Fake Founder Livestream Scam

This is a really popular one on YouTube and other social media platforms.

How it works: You see a live stream pop up that looks like it’s from a famous crypto founder, maybe someone like Vitalik Buterin or a well-known influencer. They’re on a live video, looking and sounding just like the real person. They announce a special “giveaway” or a new “investment opportunity”: send them some crypto, and they’ll double it and send it back. The video is a deepfake. The live chat is full of bots posting “It works!” and “I just got my money back!” to make it seem real.

How to avoid it: This is where you have to be a detective. The golden rule still applies: no one gives away free crypto. But also, look for clues. Does the person’s mouth movement look a little weird? Is the lighting a bit off? Deepfakes often have subtle glitches like these. The biggest red flag, though, is the offer itself. A legitimate founder would never ask you to send them crypto to “double it.” Trust your instincts, and never send crypto in response to a giveaway, no matter who appears to be hosting it.

2. The Friend in Distress Scam (Voice Cloning)

This one is heartbreaking because it preys on your trust in the people you care about.

How it works: You get a phone call from a number you don’t recognize. When you answer, you hear the voice of a family member, maybe your brother or sister, sounding panicked. They say something like, “I’m in trouble, my phone is about to die, I need you to send me some crypto right now!” The voice sounds exactly like them, and you feel the urgency. But the call is a fake. The scammer used an AI to clone your family member’s voice from a social media video or a voice note they found online.

How to avoid it: This is tough, but you have to stay calm. The biggest red flag is the urgency and the unusual request. Never send money just because a voice on the phone asks for it. My advice? Hang up and call the person back on their real, saved number. If you can’t reach them, try to contact them through another friend or family member.

3. The “AI-Powered” Romance Scam

You’ve probably heard of romance scams, but AI is making them even more convincing.

How it works: A scammer meets you on a dating app or social media. They don’t just send generic messages. They use AI to create incredibly personalized and emotionally manipulative conversations. They learn your interests, your hopes, and your dreams, and use AI to build a relationship that feels real. The AI might even generate fake photos or videos of the person to keep you hooked. Once you’re emotionally invested, they pitch a crypto investment, and you’re far more likely to go along with it because you trust them.

How to avoid it: This one is a long game. The key is to be extremely careful with people you meet online who try to rush a relationship and then quickly bring up money or investments. If something feels too perfect, it probably is. Knowing that these scams exist, and refusing to mix a new online relationship with money, are your strongest shields against them.

My Personal Crypto Security Checklist (Updated for AI)

The old security rules still work, but you have to update them to fight these new AI-powered threats. Here’s my personal checklist, with some new habits I’ve adopted.

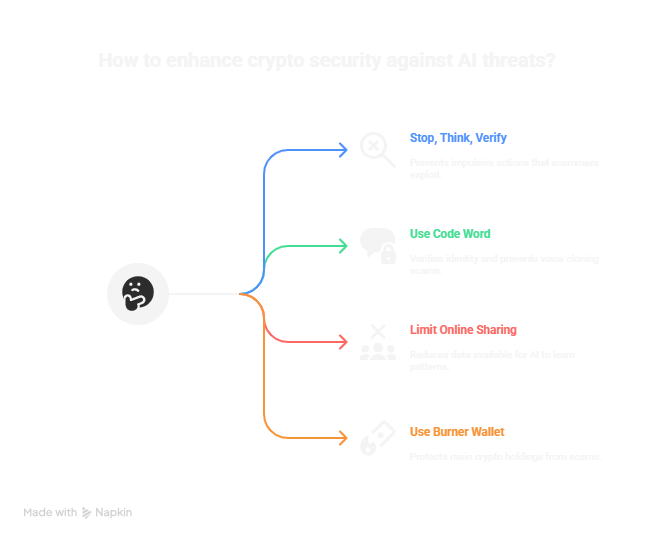

- Stop, Think, Verify. This is the number one thing you can do. Scammers, both human and AI, rely on urgency. They want you to panic and not think clearly. So, if a request is urgent, especially if it involves sending money or crypto, just pause. Hang up the phone. Close the video. Then, verify the request through a different channel. Call the person back on their known number, send them a text, or even send a DM.

- Use a “Code Word” with Friends. This sounds a little over-the-top, but believe me, it’s worth it. Pick a simple “safe word” with your closest friends and family members. Something that’s not public and that you’ll both remember. If you ever get a suspicious call asking for money, you can say, “Hey, what’s our code word?” A scammer with an AI voice clone won’t know it, but your real friend will. It’s a simple trick, but it’s one of the best ways to defeat voice cloning.

- Be Careful What You Share Online. I suspect scammers are scraping all sorts of personal data to feed their AI. The more voice messages you post, the more videos you’re tagged in, and the more content you put out there, the easier it is for an AI to learn your patterns. Every voice note you send in a public Telegram group and every short video on your profile could be used to clone your voice.

- Use a Burner Wallet. I still recommend this all the time. Never connect your main wallet, the one with most of your crypto, to a new website. Instead, keep a small amount of crypto in a separate “burner” wallet for new apps and services. That way, if you accidentally fall for a wallet-drainer scam that’s using AI-generated ads, they can only take the small amount in that burner wallet.
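
If you like to think in code, here is a rough sketch of how the first two habits on this checklist could be written down as a simple decision rule. Everything in it is my own illustration: the `Request` fields, the flag wording, and the `should_send` helper are hypothetical names, not part of any real wallet or library. It is a way to picture the habit, not a tool that detects scams.

```python
from dataclasses import dataclass


@dataclass
class Request:
    """Hypothetical description of an incoming request (illustrative only)."""
    asks_for_crypto: bool
    is_urgent: bool
    promises_returns: bool            # e.g. "send 1 ETH, get 2 back"
    verified_on_second_channel: bool  # you called back on a saved number
    code_word_matched: bool           # the caller knew your shared code word


def red_flags(req: Request) -> list[str]:
    """Return the checklist items this request fails."""
    flags = []
    if req.asks_for_crypto and req.is_urgent:
        flags.append("urgent request for crypto")
    if req.promises_returns:
        flags.append("promise of free or doubled crypto")
    if req.asks_for_crypto and not req.verified_on_second_channel:
        flags.append("not verified through a second channel")
    if req.asks_for_crypto and not req.code_word_matched:
        flags.append("code word missing or wrong")
    return flags


def should_send(req: Request) -> bool:
    """Only proceed when no red flag is raised."""
    return not red_flags(req)


# A panicked call from an unknown number: urgent, unverified, no code word.
panic_call = Request(
    asks_for_crypto=True,
    is_urgent=True,
    promises_returns=False,
    verified_on_second_channel=False,
    code_word_matched=False,
)
for flag in red_flags(panic_call):
    print("Red flag:", flag)
print("Send?", should_send(panic_call))
```

The point of the sketch is that a single red flag is enough to stop. How real the voice or video seems is not an input at all, because that is exactly the part AI can fake.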

Final Thoughts: Common Sense Still Wins

The world of AI is changing everything, and it can feel a little overwhelming. But when it comes to security, the old rule still applies: your best defense is your common sense. The technology scammers are using is advanced, but their tactics are still based on human weaknesses: greed, fear, and a desire to help a loved one.

So, if something feels off, it probably is. If a deal seems too good to be true, it is. Stay safe out there, and remember that security starts with you. Protect your keys like they’re the most important thing you own, and don’t let a convincing fake fool you.